Building benchmarks to guide researchers and validate AI-enabled findings

In the short time since artificial intelligence went mainstream, the world of finance has experienced huge layoffs, massive market payoffs and a giant demand for job experience that few people possess. The rapid adoption of AI across finance offers a real-time view of benefits and consequences likely to spread throughout the economy as more industries follow suit.

Meanwhile, that same technology has handed finance researchers the ability to explore questions that were impractical or even impossible to address with traditional techniques. Yes, AI makes work like programming or conducting statistical analyses much faster and much cheaper. But the ways AI can assist in research go well beyond handling the grunt work that humans do slowly or cannot do at all.

In a working paper, UCLA’s Andrea Eisfeldt and Gregor Schubert lay out a host of generative AI applications for finance researchers. These include simulating human answers on surveys, generating new hypotheses based on findings and uncovering “sentiment” from corporate earnings calls. They encourage researchers to take advantage of this windfall, in part by providing citations of studies that deployed each technique.

Trust, But Verify

There is, however, a catch. It won’t always be clear if researchers can rely on these AI-enabled results. No one knows yet what factors might interfere with these very useful features, and incorporating AI analyses unchecked could distort a study’s findings. Determining whether that brilliant tool is producing accurate results or something potentially flawed will likely require running a lot of traditional validity tests along the way.

Eisfeldt and Schubert request that finance researchers embrace the challenge for the good of the field at large. They would like to see researchers support these methodological innovations by publishing studies of “how these tools behave in different settings, and which design choices matter for the results.” They note that, in these very early days of the technology, many researchers will be using AI for a particular purpose for the first time.

“The goal should be to eventually build up a repertory of ‘canonical’ methods and tests,” Eisfeldt and Schubert write. “Such tests would be in the spirit of the standard diagnostic tools that exist, for example, for applied econometric methods like difference-in-difference designs. These diagnostic tools will help to reassure readers and referees that the results of particular generative AI analyses can be trusted.”

Examining the Results So Far

Eisfeldt and Schubert begin the paper by describing their approach in a related working paper they released with USC’s Miao Ben Zhang and SkyHive’s Bledi Taska in the very early days of generative AI availability.

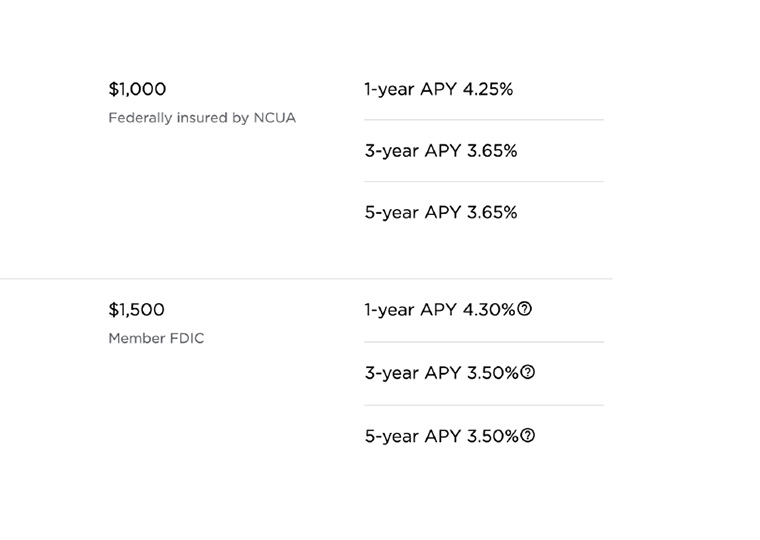

In that earlier paper, the team built stock portfolios from firms across all industries and compared portfolios of firms with high exposure to AI (those doing work expected to benefit heavily from it) with portfolios of firms with low exposure. They also devised a new method for assessing whether AI is likely to replace the work someone in a particular occupation does or simply supplement that work.

The most exposed portfolios outperformed the least exposed by 5%, or an average of 0.45% daily, in the two weeks following ChatGPT’s Nov. 30, 2022, debut. These early gains, they find, were concentrated in firms that could use AI to replace occupations. (For more on these findings, see this Review article.)
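For readers who want to see how the 5% and 0.45% figures relate, here is a rough check in Python. It is only a sketch: the article says "two weeks" without giving a trading-day count, so the 10- and 11-day windows below are assumptions, as is the choice to compound the daily figure. The function names are illustrative and do not come from the paper.

```python
def compound_return(daily_return: float, trading_days: int) -> float:
    """Cumulative return from earning `daily_return` on each of `trading_days` days."""
    return (1.0 + daily_return) ** trading_days - 1.0


def average_daily_return(cumulative_return: float, trading_days: int) -> float:
    """Constant daily return that compounds to `cumulative_return` over the window."""
    return (1.0 + cumulative_return) ** (1.0 / trading_days) - 1.0


# Average daily outperformance of the high-exposure portfolios, as reported.
DAILY_SPREAD = 0.0045

# Assumed window lengths; the article does not state the exact count.
for days in (10, 11):
    cumulative = compound_return(DAILY_SPREAD, days)
    print(f"{days} trading days: {cumulative * 100:.2f}% cumulative")
```

Under these assumptions the daily figure compounds to roughly 4.6% over 10 trading days and 5.1% over 11, which is consistent with the reported gap of about 5%.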

Eisfeldt and Schubert’s paper continues with a look at how a handful of AI applications have been used so far in finance research and teaching. Text classification, for example, can determine the sentiment of, say, a series of earnings conference call transcripts and other company publications. Retrieval-augmented generation lets researchers answer specific questions about firms or regulations without reviewing thousands of documents by hand. The study also offers suggestions on novel ways researchers might use AI applications in the future. And because this technology is still new, there is much (human) research work left to be done!

Featured Faculty

- Andrea L. Eisfeldt, Laurence D. and Lori W. Fink Endowed Chair in Finance and Professor of Finance
- Gregor Schubert, Assistant Professor of Finance

About the Research

Eisfeldt, A.L. & Schubert, G. (2024). AI and Finance.

Eisfeldt, A.L., Schubert, G., & Zhang, M.B. (2023). Generative AI and Firm Values.