Researchers find common warning signs in persuasion projects that went wrong

When the Netherlands wanted to increase organ donation in 2016, the country’s lower house of Parliament passed a bill changing the way citizens gave consent to donate. Rather than sign up for the program, as they had before, all citizens would be presumed donors at death unless they explicitly opted out.

Lawmakers were surprised when the change backfired.

The change the bill proposed — making the desired choice the default option — is a tried-and-true tactic used by governments, employers and marketers hoping to influence individual decisions. A nudge, such as a do-nothing option when every other possibility requires action, makes it easier for individuals to make decisions that align with their goals and preferences. Several European countries have nudged their way to stellar organ donation rates by assuming consent unless otherwise stated.


The Dutch, however, rebelled. With the new law to go into effect in 2020, the number of citizens refusing to donate broke records. An annual donor sign-up drive staged shortly after the bill passed registered almost six times as many signatures for non-donors as donors. The legislature eventually tamped down the backlash with some crucial adjustments to the bill. But the implications for people in the business of this sort of persuasion were troubling: A sure-fire nudge, one that had seemingly worked elsewhere, had gone rogue for the Dutch.

Even the most popular and proven nudges sometimes fail spectacularly, prompting the targeted individuals to do exactly the opposite of what the nudgers intended. These damaging anomalies — nudges that inadvertently lead to a drop in retirement savings rates or to higher energy consumption, for example — often look very much like the successful nudges documented repeatedly in workplace and government settings worldwide. What makes these seemingly reliable tactics backfire?

A recent article in Behavioral Science & Policy teases out several triggers that can make a good nudge go bad. With examples from dozens of nudge studies, UCLA Anderson’s Job Krijnen and Craig Fox, along with University of Utah’s David Tannenbaum, explain how to recognize potential nudge catastrophes, in which programs to steer people toward specific decisions might go off the rails. The authors draw on their own research and that of other experts in the field.

Certain choice presentations, the researchers find, inadvertently prompt decision makers to dwell on a few specific questions: What do these people want me to choose, and why? What will others think of me if I take that choice?

These internal musings can be dangerous for choice presenters, according to the paper, titled “Choice Architecture 2.0: Behavioral Policy as an Implicit Social Interaction.” They can lead people away from the choice a nudge was designed to encourage.

The researchers offer a checklist to help choice presenters identify situations likely to heighten these concerns. Recognizing the red flags and making what are often small changes in the choice presentation may keep a worthy nudge from becoming a spectacular failure.

Grown-Up Nudges

In the decade since the book “Nudge” by Richard Thaler and Cass Sunstein described ways to apply insights from behavioral science research to improve the design of choice environments, nudging has become a standard tool for achieving social goals. Nudges are small, cheap and generally palatable adjustments to choice presentations, such as changing the layout of options or telling people what others in the same situation choose. They make a particular option more popular without reducing the freedom to choose otherwise. These seemingly innocuous design changes can move large numbers of people toward decisions that serve the common good, or at least serve them better individually.

Among the most familiar nudges are the ways employers adjust 401(k) choices to encourage workers to save more toward retirement. Governments around the world nudge for things like energy conservation, higher college enrollment rates among low-income students and better adherence to tax deadlines. Marketers nudge to sell products, too, but neither the 2.0 research team nor Thaler focuses on the commercial use of nudging.

Thaler, a University of Chicago Booth economist, won the 2017 Nobel Prize in Economics for his research that started the nudge movement. Sunstein, now a legal scholar at Harvard Law School, helped the Obama administration launch nudges to further education and tax collection efforts. There are now hundreds of research studies, largely by behavioral economists and social psychologists, that essentially describe rules of thumb for effective nudging.

But the beauty of ethical nudging is that free choice never goes away. People are still free to go their own way, and sometimes nudges accidentally prompt them to do just that.

Good Nudges Gone Bad

Although nudging is now a mainstream influence tactic, getting the details right for an effective nudge is still surprisingly tricky. Even the most common and well-tested nudge techniques can backfire.

For example: Allowing employees to pre-commit a portion of future pay raises to retirement plans, and then automatically adjusting withholdings when those raises take effect, typically boosts participation and saving rates. Thaler and UCLA Anderson’s Shlomo Benartzi highlighted the effectiveness of this approach in a recent study, and many employers around the world have successfully adopted it.

So it was quite a surprise when four major universities that adopted a similar setup saw saving rates go down. The university employees, research found, read the save-later option as a signal that saving for retirement wasn’t urgent.

Even financial incentives are not as consistently effective as one might expect. For example, some Swedish women (but not men) stopped donating blood when the program started paying for donations. The women may have feared their altruistic acts would be misconstrued as money grabs, according to a field study of the program.

Monitoring decisions is usually a very effective means of shifting individual behavior. Citizens were far more likely to vote when a neighborhood scorecard showing who did and didn’t vote was posted. Commercial pilots improved fuel efficiency when their fuel use was tracked.

But while a conservation nudge reduced energy usage in many households, a distinct subset of the homes studied reported little or no change in consumption. The politically conservative among them may have interpreted the effort as promoting a liberal agenda, according to researchers.

Consumer advocacy groups thought they would help credit card users avoid excessive debt by requiring issuers to highlight on each bill the minimum payment needed to avoid fees. But many consumers then lowered their monthly payments to this highlighted figure. They had mistaken the nudge for a recommendation, according to a follow-up study.

Lessons from the Embarrassments

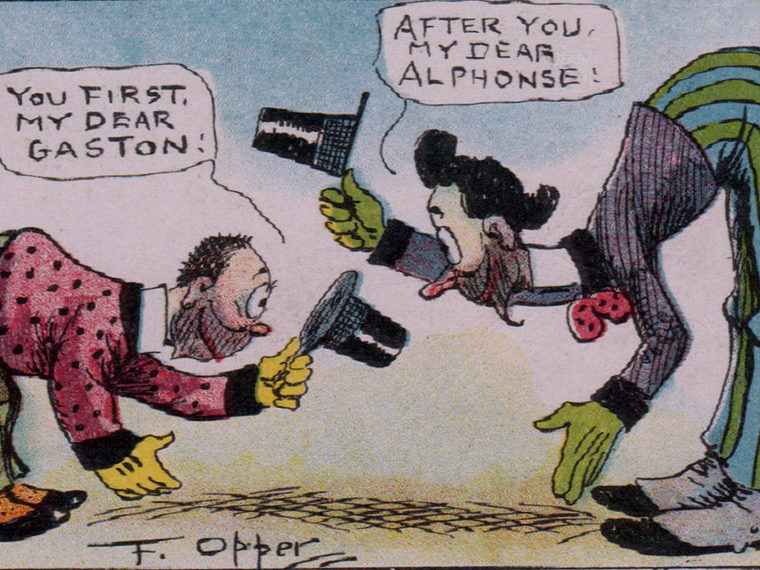

In “Choice Architecture 2.0,” Krijnen, Fox and Tannenbaum posit that each of these unintended consequences resulted from an internal debate within the people being nudged, one that greatly influenced their ultimate decisions. These musings, they contend, fall into two categories: looking for clues to the nudger’s intentions, and considering what others would think of a particular choice. Either type of “social sensemaking,” as the authors call it, can lead to unexpected results.

Sometimes the unexpected result is a beneficial one, like when control groups adopt the behavior the experimenters are trying to achieve (a well-documented pattern called the Hawthorne effect). Doctors in the control group of a study to reduce inappropriate antibiotic prescribing made dramatic improvements — a 46 percent reduction in the number of unnecessary prescriptions. They knew they were being watched and changed their habits, possibly to gain approval.

Reactions to default nudges often are the result of social sensemaking, according to the 2.0 article. For example, employees who take the default option for retirement funds often do so because they believe a benevolent employer is recommending it, the authors note.

But nudging can backfire when decision makers draw their own conclusions about the nudger’s reasoning, regardless of whether those conclusions are correct.

Social sensemaking also makes nudging riskier when decision makers focus on how others will judge their choices, according to the researchers. Consider an Israeli day care center that reasonably supposed a small fine on parents who picked up their children late might encourage punctual pickups. Instead, parental tardiness increased. According to one interpretation of the events, the fine allowed parents to see a late pickup as a financial transaction in which they took advantage of a cheap service. Previously, the parents had viewed tardiness as a moral violation, perhaps evidence of bad parenting.

Avoiding the Blowback

Nudging seems to get the most predictable results when decision makers simply don’t spend a lot of time worrying over the choice presenters’ intentions, or the reputational implications of their own choice. The 2.0 researchers looked for seemingly innocuous details in the choice presentations that might trigger social sensemaking. They suggest several scenarios that amplify such concerns and potentially make nudging treacherous.

- Uncertainty about which choice to pick leads decision makers to cast about for clues from the choice presenters, the authors argue. This helps explain why defaults on retirement savings are more popular among groups that have little financial knowledge, and why the grouping of choices (called menu partitioning) has a bigger impact in elections where voters have less information about the candidates, such as local elections with little campaign activity. With the university 401(k) plan choices, researchers suspect that workers thought (consciously or not) they had found a cue about the urgency of retirement saving in the equal presentation of the choices: “save now” or “save later.” In Thaler and Benartzi’s successful setup, the option to start saving later is offered only after employees have declined to enroll (or increase deductions) immediately.

- Distrust also leads to heightened focus on the nudger’s intentions. People are more likely to find a nudge offensive when they believe it’s coming from institutions that are not politically aligned with their own beliefs. A recent study by Tannenbaum, Fox and Harvard University’s Todd Rogers found resistance to nudges in those situations, regardless of whether the decision makers agreed with the policy that was being advocated.

- Importance of the matter at hand may lead decision makers to spend more time thinking about choice presenters’ intentions, as well as the reputational repercussions of their own choices. Matters that decision makers consider trivial or simply uninteresting aren’t likely to get such scrutiny.

- Change in the choice presentations can unintentionally nudge decision makers to question intentions. People are likely to draw causal inferences, whether the nudgers intended them or not, when a situation is noticeably unusual or unexpected, according to the 2.0 researchers.

- Transparency can carry repercussions that aren’t always predictable, the authors note. Choice presenters should be alert to backlash when they explain the effects they hope to see, as governments have done when they’ve made organ donation the default option. Sometimes forthrightness about intentions works in nudgers’ favor, and sometimes decision makers rebel against what they see as overt pressure.

The Perfect Storm

Measured against this checklist of red flags, the Dutch organ donation nudge looks particularly risky. There was an overt change in the choice presentation, complete with a lot of press explaining the intentions of the change. The subject could hardly have been more personal and, as such, was likely to be intensely important to many. Many people apparently did not trust the government to recommend the best decision for them.

The 2.0 researchers do not focus on how the Dutch, or any nudger, can fix the potential problems their proposed audit uncovers. The different circumstances of each project will determine the specific actions needed. The Dutch appeased critics in part by allowing individuals to leave the decision to donate or not to their surviving relatives. Time will tell whether the Dutch nudge encountered only a temporary backlash and, with the adjustment, will become successful.

An audit for social sensemaking can give choice architects a heads-up that a project needs more thought, or perhaps a really good pilot test, before a potentially regrettable nudge is unleashed.


About the Research

Krijnen, J. M. T., Tannenbaum, D., & Fox, C. R. (2017). Choice architecture 2.0: Behavioral policy as an implicit social interaction. Behavioral Science & Policy, 3(2), 1–18.

Beshears, J., Dai, H., Milkman, K. L., & Benartzi, S. (2017). Framing the future: The risks of pre-commitment nudges and potential of fresh start messaging.

Tannenbaum, D., Fox, C. R., & Rogers, T. (2017). On the misplaced politics of behavioral policy interventions. Nature Human Behaviour, 1(0130), 1–7. doi:10.1038/s41562-017-0130

Meeker, D., Linder, J. A., Fox, C. R., Friedberg, M. W., Persell, S. D., Goldstein, N. J., Knight, T. K., Hay, J. W., & Doctor, J. N. (2016). Effect of behavioral interventions on inappropriate antibiotic prescribing among primary care practices: A randomized clinical trial. JAMA, 315(6), 562–570. doi:10.1001/jama.2016.0275

Benartzi, S., Previtero, A., & Thaler, R. (2011). Annuitization puzzles. Journal of Economic Perspectives, 25(4), 143–164. doi:10.1257/jep.25.4.143